Edge-to-Cloud Systems

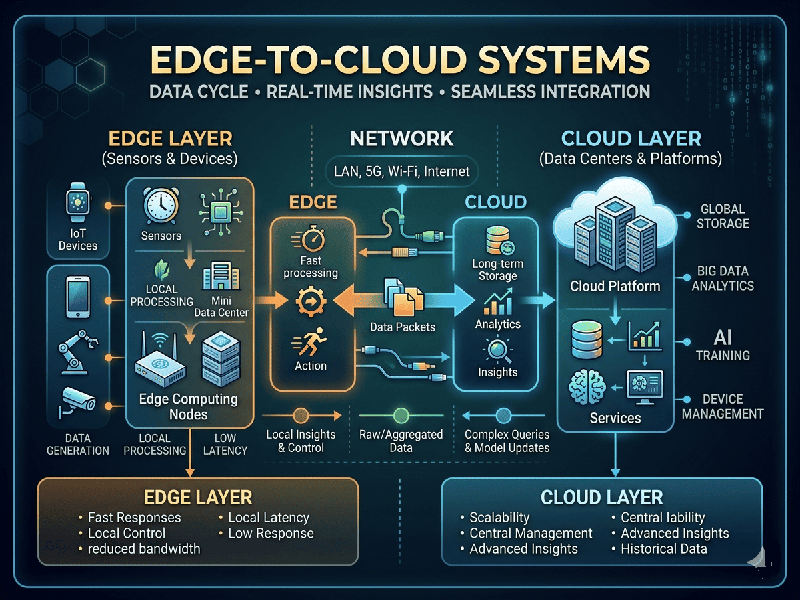

Edge-to-Cloud systems are a distributed computing architecture in which data processing is shared between local devices (the Edge) and centralized data centers (the Cloud). This avoids an all-or-nothing choice between processing everything on-site and shipping everything to a data center. Instead, each task runs where it is most effective, given its latency and bandwidth needs.

The Technical Architecture (Functional Split)

This perspective focuses on the "plumbing": how data moves, and where the computational heavy lifting happens based on latency and bandwidth.
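The latency-and-bandwidth reasoning above can be sketched as a simple placement rule. This is a minimal, illustrative heuristic, not a standard algorithm. The `Workload` type, the `place` function, and every number in the usage section (an 80 ms cloud round trip, a 20 Mbps site uplink, 25 Mbps for a raw 4K camera stream) are hypothetical assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    latency_budget_ms: float  # deadline for the decision to take effect
    data_rate_mbps: float     # raw data the edge device produces


def place(workload: Workload, cloud_rtt_ms: float, uplink_mbps: float) -> str:
    """Return "edge" or "cloud" using an illustrative two-rule heuristic."""
    # Rule 1: if a network round trip alone misses the deadline, decide locally.
    if cloud_rtt_ms >= workload.latency_budget_ms:
        return "edge"
    # Rule 2: if the raw data would exceed the uplink, process locally and
    # forward only results or events.
    if workload.data_rate_mbps > uplink_mbps:
        return "edge"
    # Otherwise the workload tolerates the trip and the link can carry the data.
    return "cloud"


if __name__ == "__main__":
    CLOUD_RTT_MS = 80.0  # assumed round trip to the cloud region
    UPLINK_MBPS = 20.0   # assumed site uplink capacity
    workloads = [
        Workload("forklift-stop", latency_budget_ms=10, data_rate_mbps=5),
        Workload("4k-camera-stream", latency_budget_ms=1_000, data_rate_mbps=25),
        Workload("quarterly-trend-report", latency_budget_ms=3_600_000, data_rate_mbps=0.1),
    ]
    for w in workloads:
        print(f"{w.name} -> {place(w, CLOUD_RTT_MS, UPLINK_MBPS)}")
    # forklift-stop -> edge
    # 4k-camera-stream -> edge
    # quarterly-trend-report -> cloud
```

Real deployments weigh more factors (compute capacity, cost, privacy), but the two rules capture the latency and bandwidth split this section describes.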

1. The Intelligent Edge (Real-Time Action)

- Low-Latency Execution: Decisions that require millisecond responses, like an autonomous forklift stopping for a human or a manufacturing sensor detecting a vibration spike, happen at the Edge.

- Data Thinning: Instead of streaming raw 4K video to the cloud 24/7, the Edge device sends only "event-based" clips (e.g., when a specific object is detected), sharply reducing bandwidth costs.

- Local Protocols: The Edge acts as a translator, converting industrial protocols (such as legacy Modbus, or OPC UA) into cloud-friendly messages, typically JSON payloads published over MQTT.
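The three Edge duties above can be sketched in plain Python. This is a minimal illustration under stated assumptions: the rolling-baseline spike test (mean plus `k` standard deviations), the pre/post-roll clip merging in `event_clips`, and the register layout in `REGISTER_MAP` are hypothetical choices, not the behavior of any specific product or gateway. Publishing the resulting JSON string to an MQTT broker would be done with an MQTT client library and is left out so the sketch has no third-party dependencies.

```python
import json
from collections import deque
from statistics import mean, pstdev


class SpikeDetector:
    """Flags a vibration sample far above its recent rolling baseline.

    Window size and k are illustrative assumptions, not tuned values.
    """

    def __init__(self, window: int = 50, k: float = 4.0) -> None:
        self.history: deque = deque(maxlen=window)
        self.k = k

    def update(self, sample: float) -> bool:
        spike = False
        if len(self.history) == self.history.maxlen:  # wait for a full baseline
            mu = mean(self.history)
            sigma = max(pstdev(self.history), 1e-9)  # avoid a zero-width band
            spike = sample > mu + self.k * sigma
        self.history.append(sample)
        return spike


def event_clips(detections: list, pre: int = 2, post: int = 2) -> list:
    """Return merged (start, end) frame ranges around detections; end is exclusive.

    Only these ranges would be uploaded; all other frames stay on the device.
    """
    clips = []
    for i, hit in enumerate(detections):
        if not hit:
            continue
        start, end = max(0, i - pre), min(len(detections), i + post + 1)
        if clips and start <= clips[-1][1]:  # overlaps or touches previous clip
            clips[-1] = (clips[-1][0], max(clips[-1][1], end))
        else:
            clips.append((start, end))
    return clips


# Hypothetical register map: holding-register offset -> (field name, scale factor).
REGISTER_MAP = {0: ("temperature_c", 0.1), 1: ("vibration_mm_s", 0.01)}


def registers_to_json(device_id: str, registers: list) -> str:
    """Translate raw 16-bit Modbus register values into a JSON message body."""
    payload = {"device": device_id}
    for offset, (field, scale) in REGISTER_MAP.items():
        payload[field] = round(registers[offset] * scale, 3)
    return json.dumps(payload)


if __name__ == "__main__":
    detector = SpikeDetector(window=5, k=3.0)
    print([detector.update(s) for s in [1.0, 1.1, 0.9, 1.0, 1.05, 5.0]])
    # [False, False, False, False, False, True]
    print(event_clips([i in (3, 5, 15) for i in range(20)]))
    # [(1, 8), (13, 18)]
    print(registers_to_json("press-7", [215, 342]))
    # {"device": "press-7", "temperature_c": 21.5, "vibration_mm_s": 3.42}
```

In the clip example, 12 of 20 frames leave the device; with sparse detections over hours of video the saving is far larger, which is the point of data thinning.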

2. The Centralized Cloud (Big Picture Analysis)

- Model Training: While the Edge runs the AI, the Cloud trains it. The Cloud aggregates data from thousands of Edge points to refine machine learning models.

- Long-Term Archival: Data required for compliance, historical audits, or multi-year trend analysis is pushed to "cold" cloud storage.

- Global Orchestration: The Cloud acts as the command center, pushing firmware updates and security patches to thousands of Edge nodes simultaneously.
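Two of the Cloud duties above reduce to small policy functions: deciding which storage tier a record belongs in, and deciding which Edge nodes a firmware push should target. The sketch below assumes a hypothetical 30-day hot-storage window and dotted numeric firmware versions such as `1.10.0`; `storage_tier`, `EdgeNode`, and `nodes_needing_update` are illustrative names, not part of any cloud provider's API.

```python
from dataclasses import dataclass
from datetime import date, timedelta

HOT_RETENTION = timedelta(days=30)  # assumed policy: older data moves to cold storage


def storage_tier(record_date: date, today: date) -> str:
    """Return "hot" for recent records and "cold" for ones past the retention window."""
    return "cold" if today - record_date > HOT_RETENTION else "hot"


def parse_version(version: str) -> tuple:
    """Turn a dotted version such as "1.10.0" into (1, 10, 0) for numeric comparison."""
    return tuple(int(part) for part in version.split("."))


@dataclass
class EdgeNode:
    node_id: str
    firmware: str


def nodes_needing_update(nodes: list, target: str) -> list:
    """Return the IDs of nodes whose firmware is older than the target release."""
    wanted = parse_version(target)
    return [n.node_id for n in nodes if parse_version(n.firmware) < wanted]


if __name__ == "__main__":
    print(storage_tier(date(2024, 1, 1), today=date(2024, 3, 1)))   # cold
    print(storage_tier(date(2024, 2, 20), today=date(2024, 3, 1)))  # hot
    fleet = [EdgeNode("gw-a", "1.4.2"), EdgeNode("gw-b", "1.10.0"), EdgeNode("gw-c", "1.9.9")]
    print(nodes_needing_update(fleet, target="1.10.0"))  # ['gw-a', 'gw-c']
```

Versions are compared as integer tuples rather than strings, because a plain string comparison would wrongly rank `"1.10.0"` below `"1.9.9"`.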