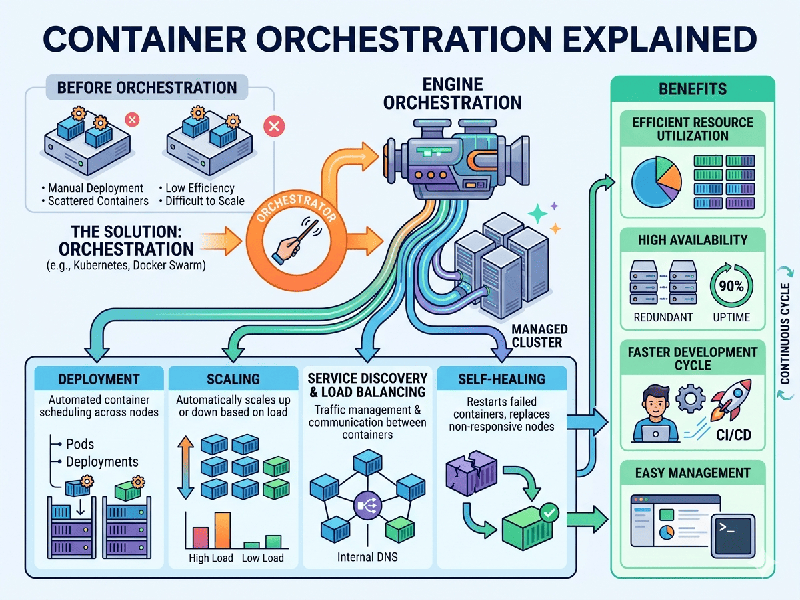

Container Orchestration Explained

Container orchestration is the automated process of managing the lifecycle of software containers in complex, dynamic environments. If a container is a single performer, orchestration is the conductor ensuring the entire symphony plays in harmony.

In a modern microservices architecture, you might have hundreds or thousands of containers. Managing them manually (starting, stopping, and networking them) is impractical at scale.

The Core Functions of Orchestration

An orchestration platform (like Kubernetes or Docker Swarm) handles the "heavy lifting" of operations:

- Provisioning & Deployment: Automatically pulling container images and starting them on available hardware.
- Resource Allocation: Placing containers on the best-suited servers based on available CPU and RAM.
- Scaling: Increasing or decreasing the number of container instances as traffic rises or falls.
- Load Balancing: Distributing incoming traffic across multiple healthy containers so that no single one is overloaded.
- Self-Healing: If a container fails, the orchestrator detects it and starts a replacement to maintain the "desired state."
- Service Discovery: Providing stable internal DNS names so that different containers (e.g., a "Payment" service and a "Cart" service) can find and talk to each other.

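To make the resource allocation step more concrete, here is a minimal Python sketch of one possible placement rule: pick the node with the most spare capacity among those that can fit the container. The `Node` class and the `pick_node` and `place` functions are hypothetical names invented for this illustration. Real orchestrators such as Kubernetes use considerably more elaborate filtering and scoring.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """Hypothetical view of one server in the cluster."""
    name: str
    free_cpu: float   # CPU cores not yet allocated
    free_ram_mb: int  # memory not yet allocated, in MiB


def pick_node(nodes: list[Node], cpu: float, ram_mb: int) -> Optional[Node]:
    """Return the fitting node with the most capacity left after placement."""
    candidates = [n for n in nodes if n.free_cpu >= cpu and n.free_ram_mb >= ram_mb]
    if not candidates:
        return None  # nothing fits; the container would have to wait
    # Rank by CPU remaining after placement, breaking ties on remaining RAM.
    return max(candidates, key=lambda n: (n.free_cpu - cpu, n.free_ram_mb - ram_mb))


def place(nodes: list[Node], cpu: float, ram_mb: int) -> Optional[str]:
    """Reserve resources on the chosen node and return its name."""
    node = pick_node(nodes, cpu, ram_mb)
    if node is None:
        return None
    node.free_cpu -= cpu
    node.free_ram_mb -= ram_mb
    return node.name


cluster = [
    Node("node-a", free_cpu=1.0, free_ram_mb=1024),
    Node("node-b", free_cpu=4.0, free_ram_mb=8192),
    Node("node-c", free_cpu=2.0, free_ram_mb=512),
]
first_choice = place(cluster, cpu=1.5, ram_mb=1024)
```

In this example only `node-b` has both enough CPU and enough RAM for the request, so it is selected and its free capacity is reduced accordingly. A request that fits nowhere returns `None` rather than overcommitting a server.
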
How It Works: The "Desired State" Model

Orchestration typically operates on a declarative model. Instead of telling the system how to do something, you tell it what you want the final result to be.

1. The Configuration: You provide a YAML or JSON file stating: "I want 5 copies of the 'Billing' container running on port 80."
2. The Control Plane: The orchestrator reads this and checks the current status of the cluster.
3. The Reconciliation Loop: If it sees only 2 containers running, it automatically triggers the deployment of 3 more to match your request.
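
The three steps above can be sketched as a single reconciliation pass in Python. This is an illustrative toy, not how any particular orchestrator is implemented: the `spec` dictionary stands in for the YAML or JSON file, and the `reconcile` function and container names are assumptions made for this example.

```python
from __future__ import annotations

# Step 1: the declared configuration (a stand-in for a YAML or JSON file).
spec = {"image": "billing", "replicas": 5, "port": 80}


def reconcile(desired_replicas: int, running: list[str]) -> dict:
    """Compare desired state with observed state and return the actions needed.

    Returns a dict with the number of containers to start and the names of
    containers to stop. No containers are actually started or stopped here.
    """
    diff = desired_replicas - len(running)
    if diff > 0:
        # Too few containers: ask for the missing number to be started.
        return {"start": diff, "stop": []}
    if diff < 0:
        # Too many containers: mark the surplus (taken from the end) for removal.
        return {"start": 0, "stop": running[diff:]}
    # Observed state already matches the desired state: nothing to do.
    return {"start": 0, "stop": []}


# Step 2: the observed state of the cluster (hypothetical container names).
observed = ["billing-1", "billing-2"]

# Step 3: one pass of the loop works out what must change.
actions = reconcile(spec["replicas"], observed)
print(actions)  # {'start': 3, 'stop': []}
```

With 5 replicas requested and 2 observed, the pass asks for 3 more to be started, matching the example in step 3. In a real system this comparison runs continuously, so the same logic that performs the initial deployment also replaces containers that fail later.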