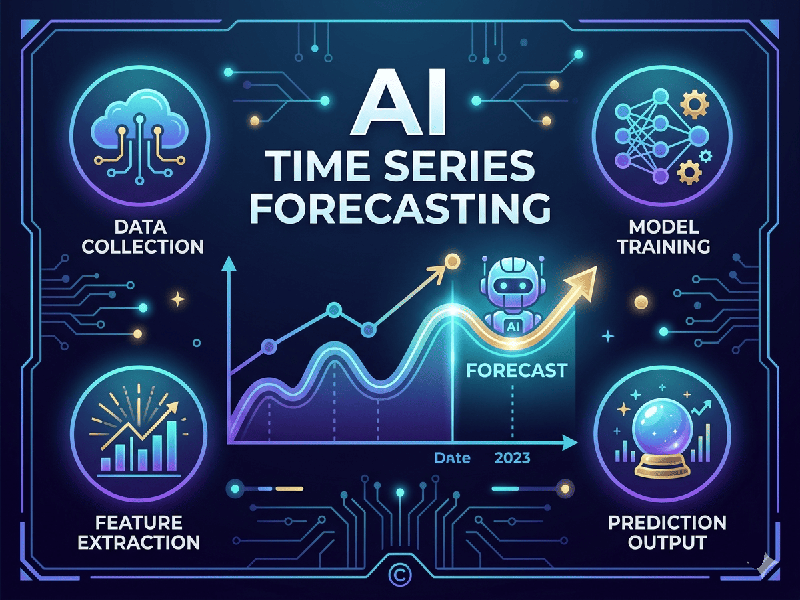

AI Time Series Forecasting

AI Time Series Forecasting involves using historical, time-stamped data to predict future values. While traditional statistical methods (like ARIMA) dominated for decades, modern AI has introduced deep learning architectures and "foundation models" that can often capture complex, non-linear patterns more effectively.

1. Key Components of Time Series

Before applying AI, it is crucial to understand what makes your data "tick":

- Trend: The long-term direction of the data (upward, downward, or flat).
- Seasonality: Repeating patterns at fixed intervals (e.g., daily spikes in electricity usage or yearly holiday shopping).
- Cycles: Fluctuations that do not repeat at fixed intervals (e.g., economic recessions).
- Irregularity/Noise: Random variations or "black swan" events that cannot be predicted.

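To make these components concrete, here is a minimal sketch of a classical additive decomposition (series = trend + seasonality + residual) in Python with NumPy. It assumes the series is evenly spaced and that the seasonal period is known in advance; the function name `decompose_additive` and the synthetic data are illustrative only. The trend is a centered moving average over one full period, the seasonality is the average detrended value at each position in the cycle, and whatever remains is treated as noise. Cycles without a fixed period are not separated out; they end up in the trend or the residual. Libraries such as statsmodels offer a ready-made `seasonal_decompose` for the same idea.

```python
import numpy as np

def decompose_additive(y: np.ndarray, period: int):
    """Classical additive decomposition: y = trend + seasonal + residual."""
    n = len(y)
    # Centered moving average; an even period uses a 2 x period MA so it stays centered.
    if period % 2 == 0:
        weights = np.r_[0.5, np.ones(period - 1), 0.5] / period
    else:
        weights = np.ones(period) / period
    half = len(weights) // 2
    trend = np.full(n, np.nan)  # edges stay NaN where the window does not fit
    trend[half:n - half] = np.convolve(y, weights, mode="valid")

    detrended = y - trend
    # Average the detrended values at each phase of the cycle, skipping NaN edges.
    phase_means = np.array([np.nanmean(detrended[i::period]) for i in range(period)])
    phase_means -= phase_means.mean()  # force the seasonal pattern to sum to zero
    seasonal = np.tile(phase_means, n // period + 1)[:n]
    residual = y - trend - seasonal
    return trend, seasonal, residual

# Synthetic series: linear trend + weekly (period-7) seasonality + small noise.
rng = np.random.default_rng(0)
t = np.arange(140)
pattern = np.array([0.0, 1.0, 2.0, 3.0, 2.0, 1.0, -9.0])  # sums to zero
y = 0.5 * t + np.tile(pattern, 20) + rng.normal(0, 0.1, t.size)

trend, seasonal, residual = decompose_additive(y, period=7)
print(np.round(seasonal[:7], 2))  # should be close to `pattern`
```
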
2. Machine Learning vs. Deep Learning

- Machine Learning (e.g., XGBoost): Often faster and more interpretable. It treats forecasting as a regression problem. It excels when you have many external variables (covariates) but requires manual creation of "lags" (values from previous time steps used as input features).
- Deep Learning (e.g., Transformers/LSTMs): These models automatically learn "feature representations" from the raw sequence. They tend to perform best on large, complex datasets but require more computational power.
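
The "manual creation of lags" that the machine learning approach relies on can be sketched as follows. This is a minimal illustration assuming a univariate NumPy series; `make_lag_matrix` is a hypothetical helper, and ordinary least squares via `np.linalg.lstsq` stands in for XGBoost so the example needs nothing beyond NumPy. The toy series is generated from a known rule so you can check that the regression recovers it.

```python
import numpy as np

def make_lag_matrix(y: np.ndarray, n_lags: int):
    """Turn a 1-D series into a supervised regression problem.

    Row i of X holds y[i], ..., y[i + n_lags - 1] (oldest lag first);
    the target for that row is y[i + n_lags].
    """
    X = np.column_stack([y[k:len(y) - n_lags + k] for k in range(n_lags)])
    target = y[n_lags:]
    return X, target

# Toy series following y[t] = 0.6*y[t-1] + 0.3*y[t-2] + 1 (no noise).
y = np.zeros(60)
y[0], y[1] = 1.0, 2.0
for t in range(2, len(y)):
    y[t] = 0.6 * y[t - 1] + 0.3 * y[t - 2] + 1.0

X, target = make_lag_matrix(y, n_lags=2)

# Stand-in for XGBoost: ordinary least squares with an intercept column.
design = np.column_stack([np.ones(len(X)), X])
coef, *_ = np.linalg.lstsq(design, target, rcond=None)
print(np.round(coef, 3))  # [intercept, weight on y[t-2], weight on y[t-1]]

# One-step-ahead forecast from the two most recent observations.
next_value = coef @ np.array([1.0, y[-2], y[-1]])
```

The same `X` and `target` arrays could be passed to any regressor that accepts a feature matrix; adding covariates simply means appending more columns to `X`.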

3. How to Evaluate Accuracy

You can't just use standard accuracy percentages, because a forecast is a continuous value that is almost never exactly "right" or "wrong." Instead, you need metrics that measure the "distance" between the forecast and reality:

- MAE (Mean Absolute Error): The average of the absolute differences between predicted and actual values. Easy to interpret (e.g., "we are off by $5 on average").
- RMSE (Root Mean Squared Error): Similar to MAE but penalizes large errors more heavily, because errors are squared before averaging. Use this if a "big miss" is much worse than several "small misses."
- MAPE (Mean Absolute Percentage Error): Shows the error as a percentage of the actual values. Useful for comparing performance across different products or scales, but undefined when any actual value is zero and unstable when actual values are close to zero.
- CRPS (Continuous Ranked Probability Score): Used for probabilistic forecasting, when the model outputs a predictive distribution (e.g., a range or confidence interval) instead of a single number. For a single-number forecast it reduces to the absolute error.
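
The four metrics above can each be written in a few lines of NumPy. This is a sketch rather than a reference implementation: the function names are illustrative, `mape` assumes no actual value is zero, and `crps_ensemble` assumes the probabilistic forecast is represented by a set of sampled values (an ensemble), using the sample form CRPS = E|X - y| - 0.5 * E|X - X'|, where X and X' are draws from the forecast and y is the observation.

```python
import numpy as np

def mae(actual, forecast):
    """Mean Absolute Error, in the units of the data."""
    actual, forecast = np.asarray(actual, float), np.asarray(forecast, float)
    return float(np.mean(np.abs(actual - forecast)))

def rmse(actual, forecast):
    """Root Mean Squared Error: squaring makes large misses count more."""
    actual, forecast = np.asarray(actual, float), np.asarray(forecast, float)
    return float(np.sqrt(np.mean((actual - forecast) ** 2)))

def mape(actual, forecast):
    """Mean Absolute Percentage Error in percent; assumes no zero actuals."""
    actual, forecast = np.asarray(actual, float), np.asarray(forecast, float)
    return float(np.mean(np.abs((actual - forecast) / actual)) * 100)

def crps_ensemble(samples, observed):
    """Sample-based CRPS for one observation: E|X - y| - 0.5 * E|X - X'|."""
    samples = np.asarray(samples, dtype=float)
    spread_to_obs = np.mean(np.abs(samples - observed))
    spread_within = 0.5 * np.mean(np.abs(samples[:, None] - samples[None, :]))
    return float(spread_to_obs - spread_within)

actual = np.array([100.0, 200.0, 300.0])
forecast = np.array([110.0, 190.0, 330.0])
print(mae(actual, forecast))   # about 16.67
print(rmse(actual, forecast))  # about 19.15, larger because of the 30-unit miss
print(mape(actual, forecast))  # about 8.33 (%)
print(crps_ensemble([1.0, 2.0, 3.0], observed=2.0))
```

With a one-member ensemble the spread term is zero, so `crps_ensemble([5.0], 3.0)` equals the absolute error of 2.0, matching the point-forecast case described above.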

4. Modern Workflow

1. Stationarity Check: Determine whether the data's mean and variance are constant over time. If not, you may need to "difference" the data (model the change between consecutive values instead of the raw values).
2. Windowing: Convert the time series into "windows" of input-output pairs (e.g., use the last 30 days to predict the next 7).
3. Backtesting: Instead of a single train-test split, use a Rolling Forecast Origin to simulate how the model would have performed at different points in the past. At each origin the model sees only data from before that point, so it never trains on the future it is asked to predict.
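
The three workflow steps can be sketched together in NumPy. This is a minimal sketch under stated assumptions: first differencing stands in for the stationarity step (a formal check such as the Augmented Dickey-Fuller test, available as `adfuller` in statsmodels, is not shown), `naive_forecast` is a placeholder model that simply repeats the last observed value, and all function names are hypothetical. The backtest moves the forecast origin forward by `step`, forecasts the next `horizon` points using only data before the origin, and records the MAE at each origin.

```python
import numpy as np

def difference(y: np.ndarray, lag: int = 1) -> np.ndarray:
    """Differencing: y[t] - y[t - lag] (lag > 1 gives seasonal differencing)."""
    return y[lag:] - y[:-lag]

def make_windows(y: np.ndarray, input_len: int, horizon: int):
    """Slice a series into (input window, output window) pairs."""
    n_windows = len(y) - input_len - horizon + 1
    if n_windows < 1:
        raise ValueError("series too short for the requested window sizes")
    X = np.stack([y[i:i + input_len] for i in range(n_windows)])
    Y = np.stack([y[i + input_len:i + input_len + horizon] for i in range(n_windows)])
    return X, Y

def naive_forecast(history: np.ndarray, horizon: int) -> np.ndarray:
    """Placeholder model: repeat the last observed value `horizon` times."""
    return np.repeat(history[-1], horizon)

def rolling_origin_backtest(y, forecast_fn, initial_train, horizon, step=1):
    """Forecast from successive origins; return the MAE at each origin."""
    scores = []
    for origin in range(initial_train, len(y) - horizon + 1, step):
        history, future = y[:origin], y[origin:origin + horizon]  # no look-ahead
        pred = forecast_fn(history, horizon)
        scores.append(np.mean(np.abs(future - pred)))
    return np.array(scores)

y = np.arange(50, dtype=float)  # steadily trending, so not stationary
d = difference(y)               # constant after differencing

X, Y = make_windows(y, input_len=30, horizon=7)  # last 30 steps -> next 7
scores = rolling_origin_backtest(y, naive_forecast, initial_train=30, horizon=7, step=7)
print(X.shape, Y.shape, scores)
```

Averaging `scores` gives a single backtest error; swapping `naive_forecast` for a real model only requires a function with the same `(history, horizon)` signature.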